Pop-up from Hell: On the growing opacity of web programs

I started to learn how to program in high school at the end of the 1990s using a mix of BASIC, Turbo Pascal and HTML with JavaScript. The seed for this blog post comes from my experience with learning how to program in JavaScript, without having much guidance or organized resources. This article continues a theme that I started in my interactive Commodore 64 article, which is to look at past programming systems and see what interesting past ideas have been lost in contemporary systems. Unlike with Commodore 64, which I first used in 2018 in the Seattle Living Computers museum, my perspective on the Early Web may be biased by personal experience. I will do my best to not make this post sound like a grumbling of an old nerd! (I thought this only comes later, but I may have been wrong...)

The 1990s, the web had a fair amount of quirky web pages, often created just for fun. The GeoCities hosting service, which has partly been archived is a witness of this and there are even academic books, such as Dot-Com Design documenting this history.

Some of the quirky things that you could do with JavaScript included creating roll-over effects (making an image change when mouse pointer is over it), creating an animation that follows the cursor as it moves and, of course, annoying the users with all sorts of pop-up windows for both entertaining and advertising purposes. Annoying pop-ups will be the starting point for my blog post, but I'll be using those to make a more general and interesting point about how programs evolve to become more opaque.

This blog post is based on a talk Popup from hell: Reflections on the most annoying 1990s program that I did recently at an (in person!) meeting of the PROGRAMme project. Thanks to everyone who attended for lively discussion and useful feedback!

Published: Friday, 8 October 2021, 1:14 PM

Tags:

academic, research, web, philosophy, talks

Read the complete article

Software designers, not engineers: An interview from alternative universe

While the physicists investigate the nature of the mysterious portal that has recently appeared in North London, several human beings recently came through the portal, which appears to be a gate into an alternative universe. As we understood from the last two people coming through the portal, it seems to be a linked with a universe that is in many ways like ours, reached about the same level of social and technological development, but differs in numerous curious details. The paths through which people in this alternative universe reached similar results as our world are often subtly different.

The most recent visitor from the alternative universe is Ms Zaha Atkinson, who would most likely be titled software engineer in our world, although the title she uses in her home world is software designer. She is a well-known software designer and has been also titled using the strange-sounding title softwarenova, a label that we will soon say more about. As with other technological and societal developments, the alternative universe seems to have arrived at very similar results as our worlds. Software is eating the (alternative) world, but it is built in very different ways. The interview with Ms Zaha Atkinson, presented below, reveals how very different the world of software is when we think of programmers as software designers rather than as software engineers.

This article is a work of fiction. Any resemblance to actual events or persons, living or dead, may or may not be entirely coincidental. It has been largely inspired by the book Designerly Ways of Knowing by Nigel Cross. Ms Zaha Atkinson also may or may not be entirely fictional.

Published: Monday, 19 April 2021, 2:30 PM

Tags:

academic, research, design, philosophy, architecture

Read the complete article

Is deep learning a new kind of programming? Operationalistic look at programming

In most discussions about how to make programming better, someone eventually says something along the lines of "we'll just have to wait until deep learning solves the problem!" I think this is a naively optimistic idea, but it raises one interesting question: In what sense are programs created using deep learning a different kind of programs than those written by hand?

This question recently arose in discussions that we have been having as part of the PROGRAMme project, which explores historical and philosophical perspectives on the question "What is a (computer) program?" and so this article owes much debt to others involved in the project, especially Maël Pégny, Liesbeth De Mol and Nick Wiggershaus.

Many people will intuitively think that, if you train a deep neural network to solve some a problem, you get a different kind of program than if you manually write some logic to solve the problem. But what exactly is the difference? In both cases, the program is a sequence of instructions that are deterministically executed by a machine, one after another, to produce the result.

When reading the excellent book Inventing Temperature by Hasok Chang recently, I came across the idea of operationalism, which I believe provides a useful perspective for thinking about the issue of deep learning and programming. The operationalist point of view was introduced by a physicist Percy Williams Bridgman. To quote: we mean by any concept nothing more than a set of operations; the concept is synonymous with the corresponding set of operations. What does this tell us about deep learning and programming?

Published: Wednesday, 7 October 2020, 2:43 AM

Tags:

programming languages, philosophy, research

Read the complete article

On architecture, urban planning and software construction

Despite having the term science in its name, it is not always clear what kind of discipline computer science actually is. Research on programming is sometimes like science, sometimes like mathematics, sometimes like engineering, sometimes like design and sometimes like art. It also has a long tradition of importing ideas from a wide range of other disciplines.

In this article, I will look at ideas from architecture and urban planning. Architecture has already been an inspiration for design patterns, although some would say that we did quite poor job and imported a trivialized (and not very useful) version of the idea. However, there are many other interesting ideas in architecture and urban planning worth exploring.

To explain why learning from architecture and urban planning is a good idea, I will first discuss similarities between problems solved by architects or urban planners and programmers. I will then look at a number of concrete ideas that we can learn, mostly taking inspiration from four books that I've read recently. There are two general areas:

-

First, writing about architecture and urban planning often uses interesting methodologies that research on programming could adopt to gain new insights into systems, programming and its problems.

-

Second, there are a number of more concrete ideas in architecture and urban planning that might directly apply to software. For example, can programmers learn how to deal with complexity of software by looking at how urban planners deal with the complexity of cities? Or, can we learn about software maintenance by looking at how buildings evolve in time?

The nature of problems that programmers face are often more similar to the problems that architects and urban planners have to deal with than, say, the problems that scientists, engineers or mathematicians need to solve. We might not want to go all the way and completely rebuild how we do programming to mirror architecture and urban planning, but treating the ideas from those disciplines as equal to those from science or engineering will make programming richer and more productive discipline.

Published: Wednesday, 8 April 2020, 12:13 AM

Tags:

academic, programming languages, philosophy, design, architecture

Read the complete article

What to teach as the first programming language and why

The number of Google search results for the phrase "choosing the first programming language" at the time of writing is 15,800. This illustrates just how debated the issue of choosing the first programming language is. In this blog post, I will not actually try to answer the question posed in the title of the post. I will not discuss what language we should teach as the first one. Instead, I will look at a more interesting question.

I will investigate the arguments that are used in favour of or against particular programming languages in computer science curriculum. I am more interested in the kind of argumentation that is employed to support a particular choice than in the specific languages involved. This approach is valuable for two reasons. First, by looking at the argumentation used, we can learn what educators consider important about computer science. Second, understanding the motivations behind different arguments allows us to make our own debates about the choice of a programming language more informed.

The scope of this blog post is limited to the choice of the first programming language taught in an undergraduate computer science programmes at universities. This means that I will not discuss other important contexts such as choices at a primary or a secondary education level, choices for independent learners and choices in other university degrees that might involve programming.

Note that this blog post is adapted from an essay that I wrote as part of a Postgrduate Certificate for Higher Education programme at University of Kent, so it assumes less knowledge about programming than a typical reader of my blog has. This makes it accessible to a broader audience thinking about education though!

Published: Monday, 2 December 2019, 5:48 PM

Tags:

functional, research, academic, programming languages, philosophy, writing

Read the complete article

What should a Software Engineering course look like?

When I joined the School of Computing at the University of Kent, I was asked what subjects I wanted to teach. One of the topics I chose was Software Engineering. I spent quite a lot of time reading about the history of software engineering when working on my paper on programming errors and I go to a fair number of professional programming conferences, so I thought I can come up with a good way of teaching it! Yet, I was not quite sure how to go about it or even what software engineering actually means.

In this blog post, I share my thought process on deciding what to cover in my Software Engineering module and also a rough list of topics. The introduction explaining why I chose these and how I structure them is perhaps more important than the list itself, but it is fairly long, so if you just want to see a list you can skip ahead to Section 2 (but please read the introduction if you want to comment on the list!) I also add a brief reflection on why I think this is a good approach, referencing a couple of ideas from philosophy of science in Section 3.

Published: Friday, 8 February 2019, 12:22 PM

Tags:

academic, teaching, philosophy

Read the complete article

Would aliens understand lambda calculus?

Unless you are a sci-fi author or some secret government agency, the question whether aliens would understand lambda calculus is probably not your main practical concern. However, the question is intriguing because it nicely vividly formulates a fundamental question about our formal mathematical knowledge. Are mathematical theories and results about them invented, i.e. constructed by humans, or discovered, i.e. are they eternal truths that exist regardless of whether there are humans to know them?

The question makes for a fantastic late night pub debate, but how can we go about answering it using a more serious methodology? Is there a paper one can read to better understand the problem? Occasionally, a talk or an online comment by a computer scientist comments on this question, but way too often, people miss the fact that the nature of mathematical entities is one of the fundamental questions of philosophy of mathematics. Alas, all those discussions are carefully hidden in the humanities department!

I believe that knowing a bit about philosophy of mathematics is important if we want to have a meaningful debate about philosophical questions of mathematics (sic!) and so I did a talk on this very subject at CodeMesh 2017. This article is slightly refined and hopefully more polished version of the talk for those who, like me, prefer reading over watching. Keep in mind that the question about the nature of mathematical entities is one of the fundamental questions of an entire academic discipline. As such, this article cannot possibly cover all the relevant discussions. Compared to some other writings in this space, this article is, at least, based on a couple of philosophical books that, I believe, have useful things to say on the subject!

Published: Tuesday, 22 May 2018, 11:27 AM

Tags:

academic, research, programming languages, philosophy

Read the complete article

The design side of programming language design

The word "design" is often used when talking about programming languages. In fact, it even made it into the name of one of the most prestigious academic programming conferences, Programming Language Design and Implementation (PLDI). Yet, it is almost impossible to come across a paper about programming languages that uses design methods to study its subject. We intuitively feel that "design" is an important aspect of programming languages, but we never found a way to talk about it and instead treat programming languages as mathematical puzzles or as engineering problems.

This is a shame. Applying design thinking, in the sense used in applied arts, can let us talk about, explore and answer important questions about programming languages that are ignored when we limit ourselves to mathematical or engineering methods. I think the programming language community is, perhaps unconsciously, aware of this - one of the reviews of my recent PLDI paper said "this is a nice, novel design paper, and the community often wants more design papers in our conferences". The problem is that we we do not know how to write and evaluate work that follows design methodology.

To better understand how design works, I recently read The Philosophy of Design by Glenn Parsons. The book perhaps did not answer many of my questions about design, but it did give me a number of ideas about what design is, what questions it can explore and how those could be relevant for the study of programming languages...

Published: Tuesday, 12 September 2017, 6:42 PM

Tags:

academic, research, programming languages, philosophy, design

Read the complete article

Papers we Scrutinize: How to critically read papers

As someone who enjoys being at the intersection of the academic world and the world of industry, I'm very happy to see any attempts at bridging this harmful gap. For this reason, it is great to see that more people are interested in reading academic papers and that initiatives like Papers We Love are there to help.

There is one caveat with academic papers though. It is very easy to see academic papers as containing eternal and unquestionable truths, rather than as something that the reader should actively interact with. I recently remarked about this saying that "reading papers" is too passive. I also mentioned one way of doing more than just "reading", which is to write "critical reviews" – something that we recently tried to do at the Salon des Refusés workshop. In this post, I would like to expand my remark.

First of all, it is very easy to miss the context in which papers are written. The life of an academic paper is not complete after it is published. Instead, it continues living its own life – people refer to it in various contexts, give different meanings to entities that appear in the paper and may "love" different parts of the paper than the author. This also means that there are different ways of reading papers. You can try to reconstruct the original historical context, read it according to the current main-stream interpretation or see it as an inspiration for your own ideas.

I suspect that many people, both in academia and outside, read papers without worrying about how they are reading them. You can certainly "do science" or "read papers" without reflecting on the process. That said, I think the philosophical reflection is important if we do not want to get stuck in local maxima.

Published: Wednesday, 12 April 2017, 3:05 PM

Tags:

academic, programming languages, philosophy

Read the complete article

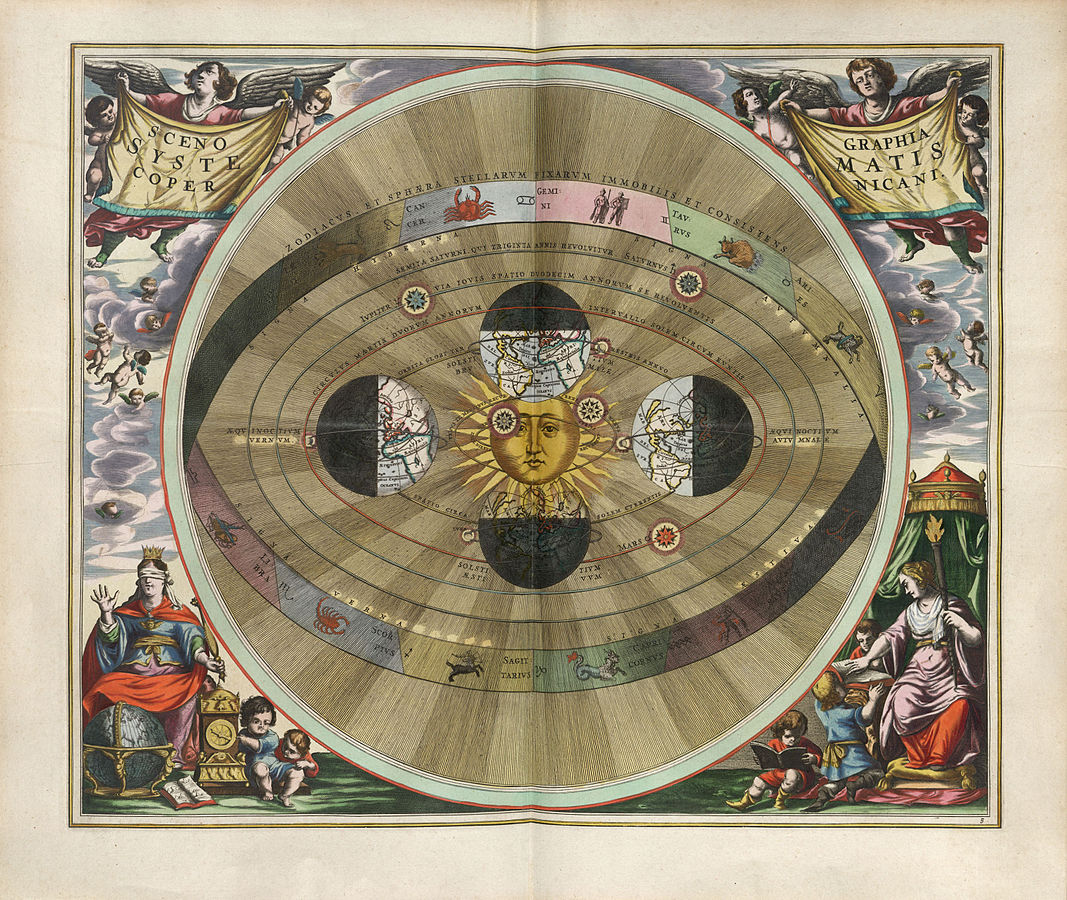

The mythology of programming language ideas

If you read a about the history of science, you will no doubt be astonished by some of the amazing theories that people used to believe. I recently finished reading The Invention of Science by David Wootton, which documents many of them (and is well worth reading, not just because of this!) For example, did you know that if you put garlic on a magnet, the magnet will stop working? Fortunately, you can recover the magnet by smearing goats blood on it. Giambattista della Porta tested this and concluded that it was false, but Alexander Ross argued that our garlic is perhaps not so vigorous as those of ancient Greeks.

You can just laugh at these stories, but they can serve as interesting lessons for any scientist. The lesson, however, is not the obvious one. Academics will sometimes read those stories and use them to argue against something they do not consider scientific - arguing that it is like believing that garlic break magnets.

This is not how the analogy works. What is amazing about the old stories is that the conclusions that now seem funny often had very solid reasoning behind them. If you believed in the basic assumption of the time, then you could reach the same conclusions by following fairly sound reasoning principles. In other words, the amazing theories were scientific and entirely reasonable. The lesson is that what seems a completely reasonable idea now, may turn out to be wrong and quite hilarious in retrospect.

In this article, I will look at a couple of amazing theories that people believed in the past and I will explain why they were reasonable given the way of thinking of the time. Along the way, I will explore some of the ways of thinking that we use today about programming and computer science and why they might appear silly in the future.

Published: Tuesday, 7 March 2017, 4:31 PM

Tags:

academic, programming languages, philosophy

Read the complete article

Thinking the unthinkable: What we cannot think in programming

This blog post is an edited and more accessible version of an article Thinking the unthinkable that I recently presented at the PPIG 2016 conference. The original article (PDF) has proper references and more details; the minimalistic talk slides give a quick summary of the article.

If you find this interesting, you might also be interested in a workshop we are planning. To keep in touch leave a comment on GitHub, ping me at @tomaspetricek or email tomas@tomasp.net!

Our thinking is shaped by basic assumptions that we rarely question. These assumptions exist at different scales. Foucault's episteme describes basic assumptions of an epoch (such as Renaissance); Kuhn's research paradigms determine how scientists of a given discipline approach problems and Lakatos' research programmes provide undisputable assumptions followed by a group of scientists.

In this article, I try to discover some of the hidden assumptions in the area of programming language research. What are assumptions that we never question and that determine how programming languages are designed? And what might the world look like if we based our design method on different basic principles?

Published: Tuesday, 11 October 2016, 6:30 PM

Tags:

philosophy, programming languages

Read the complete article

Philosophical questions about programming

Combining philosophy and computer science might appear a bit odd. The disciplines have very little overlap. Both philosophers and computer scientists get taught formal logic at some point in their undergraduate courses, but that's probably as close as they get.

But the fact that the disciplines do not overlap much might very well be the reason why putting them together is interesting. In an article about Design and Science, Joichi Ito (from MIT Media Lab), describes the term antidisciplinary and nicely summarizes why looking at such unusual combinations is worthwhile:

Interdisciplinary work is when people from different disciplines work together. But antidisciplinary is something very different; it's about working in spaces that simply do not fit into any existing academic discipline.

[When focusing on disciplines, it] takes more and more effort and resources to make a unique contribution. While the space between and beyond the disciplines can be academically risky, it (...) requires fewer resources to try promising, unorthodox approaches; and provides the potential to have tremendous impact (...).

As you can see from some of my earlier blog posts, I think the space between philosophy and computer science is an interesting area. In this article, I'll explain why. Unlike some of the previous posts (about miscomputation, types and philosophy of science), this post is quite broad and does not go into much detail.

At the danger of sounding like a collection of random rants, I look at a number of questions that arise when you look at computer science from the philosophical perspective, but I won't attempt to answer them. You can see this article as a research proposal too - and I hope to write more about some of the questions in the future. I wish antidisciplinary work was more common and I believe looking into such questions could have the tremendous impact that Joichi Ito mentioned.

Published: Thursday, 26 May 2016, 2:33 PM

Tags:

philosophy, programming languages

Read the complete article

Philosophy of science books every computer scientist should read

When I tell my fellow computer scientists or software developers that I'm interested in philosophy of science, they first look a bit confused, then we have a really interesting discussion about it and then they ask me for some interesting books they could read about it. Given that Christmas is just around the corner and some of the readers might still be looking for a good present to get, I thought that now is the perfect time to turn my answer into a blog post!

So, what is philosophy of science about? In summary, it is about trying to better understand science. I'll keep using the word science here, but I think engineering would work equally well. As someone who recently spent a couple of years doing a PhD on programming language theory, I find this extremely important for computer science (and programming). How can we make better programming languages if we do not know what better means? And what do we mean when we talk about very basic concepts like types or programming errors?

Reading about philosophy of science inspired me to write a couple of essays on some of the topics above including What can programming language research learn from the philosophy of science? and two essays that discuss the nature of types in programming languages and also the nature of errors and miscomputations. This blog post lists some of the interesting books that I've read and that influenced my thinking (not just) when writing the aforementioned essays.

Published: Thursday, 10 December 2015, 1:42 PM

Tags:

philosophy, research, talks

Read the complete article

Miscomputation: Learning to live with errors

If trials of three or four simple cases have been made, and are found to agree with the results given by the engine, it is scarcely possible that there can be any error (...).

Charles Babbage, On the mathematical

powers of the calculating engine (1837)

Anybody who has something to do with modern computers will agree that the above statement made by Charles Babbage about the analytical engine is understatement, to say the least.

Computer programs do not always work as expected. There is a complex taxonomy of errors or miscomputations. The taxonomy of possible errors is itself interesting. Syntax errors like missing semicolons are quite obvious and are easy to catch. Logical errors are harder to find, but at least we know that something went wrong. For example, our algorithm does not correctly sort some lists. There are also issues that may or may not be actual errors. For example an algorithm in online store might suggest slightly suspicious products. Finally, we also have concurrency errors that happen very rarely in some very specific scenario.

If Babbage was right, we would just try three or four simple cases and eradicate all errors from our programs, but eliminating errors is not so easy. In retrospect, it is quite interesting to see how long it took early computer engineers to realise that coding (i.e. translating mathematical algorithm to program code) errors are a problem:

Errors in coding were only gradually recognized to be a significant problem: a typical early comment was that of Miller [circa 1949], who wrote that such errors, along with hardware faults, could be "expected, in time, to become infrequent".

Mark Priestley, Science of Operations (2011)

We mostly got rid of hardware faults, but coding errors are still here. Programmers spent over 50 years finding different practical strategies for dealing with them. In this blog post, I want to look at four of the strategies. Quite curiously, there is a very wide range.

Published: Monday, 27 July 2015, 3:15 PM

Tags:

philosophy, research, programming languages

Read the complete article

Against the definition of types

Science is much more 'sloppy' and 'irrational' than its methodological image.

Paul Feyerabend, Against Method (1975)

Programming languages are a fascinating area because they combine computer science (and logic) with many other disciplines including sociology, human computer interaction and things that cannot be scientifically quantified like intuition, taste and (for better or worse) politics.

When we talk about programming languages, we often treat it mainly as scientific discussion seeking some objective truth. This is not surprising - science is surrounded by an aura of perfection and so it is easy to think that focusing on the core scientific essence (and leaving out everything) else is the right way of looking at programming languages.

However this leaves out many things that make programming languages interesting. I believe that one way to fill the missing gap is to look at philosophy of science, which can help us understand how programming language research is done and how it should be done. I wrote about the general idea in a blog post (and essay) last year. Today, I want to talk about one specific topic: What is the meaning of types?

This blog post is a shorter (less philosophical and more to the point) version of an essay that I submitted to Onward! Essays 2015. If you want to get a quick peek at the ideas in the essay, then continue reading here! If you want to read the full essay (or save it for later), you can get the full version from here.

Published: Thursday, 14 May 2015, 4:46 PM

Tags:

philosophy, research

Read the complete article

What can programming language research learn from the philosophy of science?

As someone doing programming language research, I find it really interesting to think about how programming language research is done, how it has been done in the past and how it should be done. This kind of questions are usually asked by philosophy of science, but only a few people have discussed this in the context of computing (or even programming languages).

So, my starting point was to look at the classic works in the general philosophy of science and see which of these could tell us something about programming languages.

I wrote an article about some of these ideas and presented it last week at the second symposium on History and Philosophy of Programming. For me, it was amazing to talk with interesting people working on so many great related ideas! Anyway, now that the paper has been published and I did a talk, I should also share it on my blog:

- What can Programming Language Research Learn from the Philosophy of Science?

- Fairly minimalistic slides from my talk at the symposium

One feedback that I got when I submitted the paper to Onward! Essays last year was that the paper uses a lot of philosophy of science terminology. This was partly the point of the paper, but the feedback inspired me to write a more readable overview in a form of blog post. So, if you want to get a quick peek at some of the ideas, you can also read this short blog (and then perhaps go back to the paper)!

Published: Thursday, 10 April 2014, 6:16 PM

Tags:

research, philosophy

Read the complete article